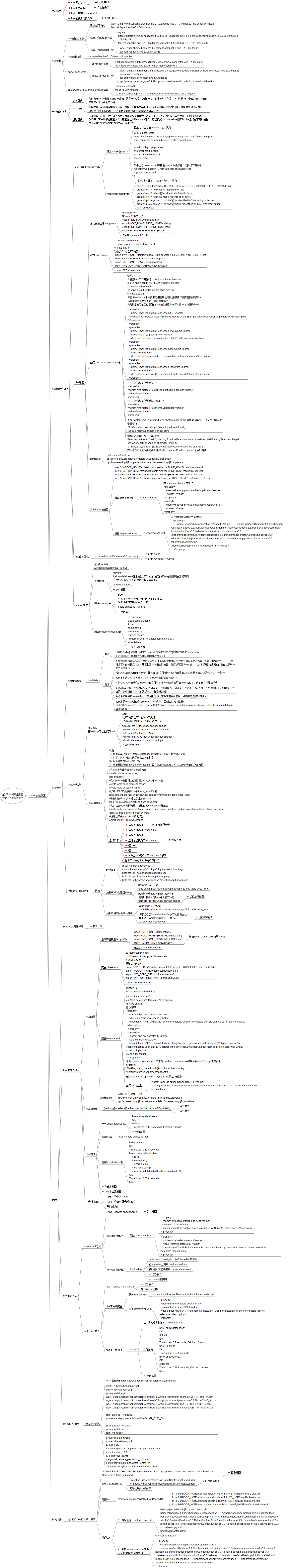

Hive Installation and Configuration

Hive remote mode

Metadata is stored in a MySQL database

Install MySQL via yum

yum -y install wget

wget http://repo.mysql.com/mysql-community-release-el7-5.noarch.rpm

rpm -ivh mysql-community-release-el7-5.noarch.rpm

yum install -y mysql-server

systemctl start mysqld

systemctl enable mysqld

mysql -u root

(

If mysql -u root above does not bring up the mysql> prompt, run the following commands:

/usr/bin/mysqladmin -u root -h mysql password 'root'

mysql -u root -proot

)

Create the hive database and user

CREATE SCHEMA `hive` DEFAULT CHARACTER SET utf8mb4 COLLATE utf8mb4_bin ;

grant all on *.* to hive@'%' identified by 'hive';

grant all on *.* to hive@'localhost' identified by 'hive';

grant all on *.* to hive@'master' identified by 'hive';

grant all privileges on *.* to 'hive'@'%' identified by 'hive' with grant option;

grant all privileges on *.* to 'hive'@'master' identified by 'hive' with grant option;

flush privileges;

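Hive configuration

Configure hive-env.sh

Configure hive-site.xml (on the master node)

Notes:

1. Create a local directory on Linux: mkdir /usr/local/hive/iotmp

2. Go to Hive's conf directory, then create and edit hive-site.xml

cd /usr/local/hive/conf

cp ./hive-default.xml.template ./hive-site.xml

vi ./hive-site.xml

3. In hive-site.xml, find the properties listed below and change their values (in vi, type "/" followed by the text to search for).

Alternatively, delete all the default settings and add the properties from scratch.

4. For the metadata store, use the MySQL database created earlier: database hive, with user name and password both set to hive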

<property>

<name>javax.jdo.option.ConnectionURL</name>

<value>jdbc:mysql://master:3306/hive?useSSL=false&amp;useUnicode=true&amp;characterEncoding=UTF-8</value>

</property>

<property>

<name>javax.jdo.option.ConnectionDriverName</name>

<value>com.mysql.jdbc.Driver</value>

<description>Driver class name for a JDBC metastore</description>

</property>

<property>

<name>javax.jdo.option.ConnectionUserName</name>

<value>hive</value>

<description>Username to use against metastore database</description>

</property>

<property>

<name>javax.jdo.option.ConnectionPassword</name>

<value>hive</value>

<description>password to use against metastore database</description>

</property>

<!-- Disable metastore authorization -->

<property>

<name>hive.metastore.event.db.notification.api.auth</name>

<value>false</value>

</property>

<!-- Disable metastore schema version verification -->

<property>

<name>hive.metastore.schema.verification</name>

<value>false</value>

</property>
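
Inside an XML file such as hive-site.xml, a literal & in the JDBC URL query string has to be written as &amp;, otherwise the file does not parse. The following is a minimal sketch of producing the escaped form from a plain URL; escape_xml_amp is a hypothetical helper name used only for illustration and is not part of Hive.

```shell
# Escape '&' so a JDBC URL can be pasted into an XML <value> element.
# escape_xml_amp is a hypothetical helper name, not a Hive or MySQL tool.
escape_xml_amp() {
    # In the sed replacement, '\&' is a literal ampersand.
    printf '%s\n' "$1" | sed 's/&/\&amp;/g'
}

# The plain URL as it would be typed on a command line.
jdbc_url='jdbc:mysql://master:3306/hive?useSSL=false&useUnicode=true&characterEncoding=UTF-8'

# Print the form that is safe to place in hive-site.xml.
escape_xml_amp "$jdbc_url"
```

The printed line can then be pasted as the value of javax.jdo.option.ConnectionURL.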

Modify the Hadoop configuration

ln -s $HADOOP_HOME/etc/hadoop/core-site.xml $HIVE_HOME/conf/core-site.xml

ln -s $HADOOP_HOME/etc/hadoop/hdfs-site.xml $HIVE_HOME/conf/hdfs-site.xml

ln -s $HADOOP_HOME/etc/hadoop/yarn-site.xml $HIVE_HOME/conf/yarn-site.xml

ln -s $HADOOP_HOME/etc/hadoop/mapred-site.xml $HIVE_HOME/conf/mapred-site.xml

Edit mapred-site.xml

vi ./mapred-site.xml

Add the following before </configuration>:

<property>

<name>mapreduce.application.classpath</name>

<value>/usr/local/hadoop-3.3.1/etc/hadoop:/usr/local/hadoop-3.3.1/share/hadoop/common/lib/*:/usr/local/hadoop-3.3.1/share/hadoop/common/*:/usr/local/hadoop-3.3.1/share/hadoop/hdfs:/usr/local/hadoop-3.3.1/share/hadoop/hdfs/lib/*:/usr/local/hadoop-3.3.1/share/hadoop/hdfs/*:/usr/local/hadoop-3.3.1/share/hadoop/mapreduce/*:/usr/local/hadoop-3.3.1/share/hadoop/yarn:/usr/local/hadoop-3.3.1/share/hadoop/yarn/lib/*:/usr/local/hadoop-3.3.1/share/hadoop/yarn/*</value>

</property>

Using Hive

Loading data into Hive

Hive word count

Prepare the data

Upload the Hadoop logs to HDFS

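Run the word count

Notes:

1. If you have already run create database myname; earlier, skip that line below

2. Replace myname below with the pinyin of your own name

3. Run the following script in the Hive command line

4. If you run create table wordcount more than once, append _1, _2, and so on to wordcount to avoid a "table already exists" error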

-- Create the myname database in Hive

create database myname;

use myname;

-- Create the docs_tmp and docs tables in the myname database

create table docs_tmp(line string);

create table docs(line string);

-- Load the data from HDFS into the temporary Hive table docs_tmp

load data inpath '/user/hadoop/hadooplogs' overwrite into table docs_tmp;

-- Copy the rows from the temporary table docs_tmp into the final table docs

INSERT into docs select line from docs_tmp;

-- Compute word frequencies from docs and store the result in the wordcount table

create table wordcount as (select word, count(1) as count from (select explode(split(line, ' ')) as word from docs) w group by word order by word);

-- Count the number of rows in the wordcount result table

select count(*) from wordcount;
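
To sanity-check what the query computes, the same idea (split each line on single spaces, then count each distinct word) can be approximated locally on a small sample. This is only a sketch under stated assumptions: count_words is a hypothetical helper, the sample input is made up, and the output order follows the C locale rather than Hive's ordering.

```shell
# Local approximation of the Hive word count:
# split every line on single spaces, then count each distinct word.
# count_words is a hypothetical helper, not a Hive or Hadoop command.
count_words() {
    tr ' ' '\n' |
        awk '{ c[$0]++ } END { for (w in c) printf "%s\t%d\n", w, c[w] }' |
        LC_ALL=C sort
}

# Made-up sample input standing in for a few log lines.
printf 'INFO start job\nINFO end job\n' | count_words
```

On a real log file, piping the file into count_words and then into wc -l gives a row count that can be compared with the result of select count(*) from wordcount, keeping in mind that runs of consecutive spaces produce an empty-string word in both cases.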

Reference

Hive embedded mode

Hive configuration

Configure hive-env.sh

Configure hive-site.xml

cd /usr/local/hive/conf

cp ./hive-default.xml.template ./hive-site.xml

vi ./hive-site.xml

Changes:

<property>

<name>hive.metastore.warehouse.dir</name>

<value>/user/hive/warehouse</value>

<description>Location of the default database for the warehouse.</description>

</property>

<property>

<name>hive.exec.scratchdir</name>

<value>/tmp/hive</value>

<description>HDFS root scratch dir for Hive jobs which gets created with write all (733) permission. For each connecting user, an HDFS scratch dir: ${hive.exec.scratchdir}/&lt;username&gt; is created, with ${hive.scratch.dir.permission}.</description>

</property>

In vi, search with /system:java.io.tmpdir and /system:user.name, pressing n to jump to the next match; each appears in several places.

Replace them globally:

:%s#${system:java.io.tmpdir}#/usr/local/hive/iotmp#g

:%s#${system:user.name}#hadoop#g
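
The same two substitutions can also be applied non-interactively with sed. The sketch below runs them against a small temporary file with made-up content standing in for hive-site.xml, and writes the result to a new file instead of editing in place; the replacement values are the ones used in the vi commands above.

```shell
# Create a small sample file standing in for hive-site.xml (made-up content).
conf=$(mktemp)
cat > "$conf" <<'EOF'
<value>${system:java.io.tmpdir}/${system:user.name}</value>
<value>${system:java.io.tmpdir}/${hive.session.id}_resources</value>
EOF

# Apply both substitutions; '#' is the delimiter because the
# replacement text contains '/'. '\$' and '\.' match literally.
sed -e 's#\${system:java\.io\.tmpdir}#/usr/local/hive/iotmp#g' \
    -e 's#\${system:user\.name}#hadoop#g' "$conf" > "$conf.new"

# Show the rewritten content.
cat "$conf.new"
```

Writing to a separate file keeps the original untouched, so the two versions can be compared with diff before the new one is moved into place.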

Hive service mode

MySQL installation reference

Install from the official RPMs

mkdir -p /home/hadoop/mysql

cd /home/hadoop/mysql

yum -y install wget

wget -c https://cdn.mysql.com/archives/mysql-5.7/mysql-community-client-5.7.38-1.el7.x86_64.rpm

wget -c https://cdn.mysql.com/archives/mysql-5.7/mysql-community-common-5.7.38-1.el7.x86_64.rpm

wget -c https://cdn.mysql.com/archives/mysql-5.7/mysql-community-libs-5.7.38-1.el7.x86_64.rpm

wget -c https://cdn.mysql.com/archives/mysql-5.7/mysql-community-server-5.7.38-1.el7.x86_64.rpm

rpm -qa|grep -i mariadb

rpm -e --nodeps mariadb-libs-5.5.60-1.el7_5.x86_64

yum -y install net-tools

yum -y install perl

rpm -ivh mysql*

Common problems

Error when running the Hive word count

ql.Driver: FAILED: Execution Error, return code 2 from org.apache.hadoop.hive.ql.exec.mr.MapRedTask MapReduce Jobs Launched:

Analysis: check the YARN logs

Fix 2:

1. First, run: hadoop classpath

[hadoop@master sbin]$ hadoop classpath

/usr/local/hadoop-3.3.1/etc/hadoop:/usr/local/hadoop-3.3.1/share/hadoop/common/lib/*:/usr/local/hadoop-3.3.1/share/hadoop/common/*:/usr/local/hadoop-3.3.1/share/hadoop/hdfs:/usr/local/hadoop-3.3.1/share/hadoop/hdfs/lib/*:/usr/local/hadoop-3.3.1/share/hadoop/hdfs/*:/usr/local/hadoop-3.3.1/share/hadoop/mapreduce/*:/usr/local/hadoop-3.3.1/share/hadoop/yarn:/usr/local/hadoop-3.3.1/share/hadoop/yarn/lib/*:/usr/local/hadoop-3.3.1/share/hadoop/yarn/*

[hadoop@master sbin]$

2. Edit the mapred-site.xml file

(add the output from step 1 as the value)

<property>

<name>mapreduce.application.classpath</name>

<value>/usr/local/hadoop-3.3.1/etc/hadoop:/usr/local/hadoop-3.3.1/share/hadoop/common/lib/*:/usr/local/hadoop-3.3.1/share/hadoop/common/*:/usr/local/hadoop-3.3.1/share/hadoop/hdfs:/usr/local/hadoop-3.3.1/share/hadoop/hdfs/lib/*:/usr/local/hadoop-3.3.1/share/hadoop/hdfs/*:/usr/local/hadoop-3.3.1/share/hadoop/mapreduce/*:/usr/local/hadoop-3.3.1/share/hadoop/yarn:/usr/local/hadoop-3.3.1/share/hadoop/yarn/lib/*:/usr/local/hadoop-3.3.1/share/hadoop/yarn/*</value>

</property>
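
Because the value is long and easy to mistype, the property can be generated from the classpath string rather than copied by hand. This is a minimal sketch: classpath_property is a hypothetical helper, and the shortened classpath passed to it here is only sample input. On a cluster node the argument would presumably be "$(hadoop classpath)".

```shell
# Print a mapred-site.xml property for a given classpath string.
# classpath_property is a hypothetical helper, not a Hadoop command.
classpath_property() {
    printf '<property>\n'
    printf '<name>mapreduce.application.classpath</name>\n'
    printf '<value>%s</value>\n' "$1"
    printf '</property>\n'
}

# Sample input (shortened); on a real node use: classpath_property "$(hadoop classpath)"
classpath_property '/usr/local/hadoop-3.3.1/etc/hadoop:/usr/local/hadoop-3.3.1/share/hadoop/common/lib/*'
```

The printed block can be pasted before </configuration> in mapred-site.xml.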
